Schema Driven Art

We turned our brand identity into a machine-readable spec because robots don't have taste

We're in the middle of a rebrand at Logic. Part of that work has included publishing a flagship guide: a deep dive on how to build an AI agent in the current technical landscape. We spent dozens of hours putting this guide together.

In order to make the guide sing, we wanted to pair it with quality, curated, editorial imagery. We didn’t want stock photos or flavorless slop gradients. We needed a cohesive visual series that felt intentional, authored, and unmistakably ours.

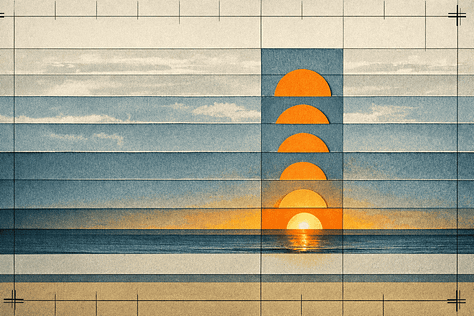

Image generation is a fickle process. It’s easy to ask ChatGPT to make an image of a collage of a sun rising over the sea. What we get back is the continental breakfast buffet of visual art. It’s food, yes, but nobody’s recommending the scrambled eggs.

We can get more specific in our prompt to steer the model and hope for something closer to a distinctive, brand-aware asset: Make a collage of the sun rising over the sea. It starts at the horizon as a bright hot orange dot then transforms into an orange circle of cut paper. There are 8 suns rising up through strips of torn paper intermingling to make the sea and the sky. A sense of calm wonder should dominate the scene, as though we’re entering the dawn of a new, unknown landscape.

Better, insofar as the LLM followed most of the instructions. But we’re still pressing up against baked-in assumptions in the model that we need to control. Everything we don’t tell the model to do, it infers through probabilities. If we clear the context and feed the same prompt in without any strong steering, there’s no guarantee we’ll get consistent results: colors will drift, compositions will not cohere, lighting will change. We had a folder full of uncanny orphans that all shared the same synthetic aftertaste.

There’s a deeper problem beyond consistency, though: taste. At least for now, LLMs have no sense of taste. But they are very good at interpreting instructions, and they really like JSON.

From Moodboard to Spec

The hard part isn’t understanding the need for a spec; it’s writing one. You know what you like when you see it, but translating that gut feeling into a spec requires a different kind of precision.

We started where any designer starts: the moodboard. We pulled references to capture sensibilities. We collected lighting quality, paper texture, color temperature, and the specific way shadows fall when materials meet. We gathered raw aesthetic data.

Then, we handed that data to the model and asked it to write a schema it could understand for creating related images.

This works because the vocabulary an LLM uses to describe visual quality is the same vocabulary it respects when generating it. By using the model as a translator for itself, we bridge the gap between human taste and agentic interpretation. Our eyes provide the input; the schema provides the output.

The first pass is never perfect. We ran a series of generations, scrutinized the failures, and tuned the knobs. A forbidden list is effectively a graveyard for our design mistakes. “Glossy” earned its spot after the first three outputs arrived with that synthetic sheen. “Neon” was banned the moment our hero orange went radioactive. Each failure sharpened the spec.
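One way to keep that graveyard honest is to record each ban next to the failure that earned it. The sketch below is illustrative only: `BAN_LOG`, `forbidden_terms`, and `find_forbidden` are hypothetical names, and linting scene text against the list is our assumption about how the list could be used, not a description of the actual tooling.

```python
import re

# Each ban is stored with the failure that caused it (both entries come from the text above).
BAN_LOG = [
    ("glossy", "first three outputs arrived with a synthetic sheen"),
    ("neon", "hero orange went radioactive"),
]

def forbidden_terms(ban_log):
    """Collapse the ban log into the de-duplicated list stored in the spec."""
    seen = []
    for term, _reason in ban_log:
        term = term.strip().lower()
        if term not in seen:
            seen.append(term)
    return seen

def find_forbidden(text, forbidden):
    """Return the forbidden terms that appear as whole words in text."""
    return [
        term for term in forbidden
        if re.search(rf"\b{re.escape(term)}\b", text, flags=re.IGNORECASE)
    ]

forbidden = forbidden_terms(BAN_LOG)
print(forbidden)  # ['glossy', 'neon']
print(find_forbidden("A neon sun over matte paper waves", forbidden))  # ['neon']
```

Keeping the reason beside the term means a later editor can tell whether a ban still applies before removing it.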

After a few rounds of iteration, we landed on a style_capsule that encoded our taste. We curated the model’s behavior until the results stopped feeling like an approximation and started feeling like the output of a proper design system.

Brand Guides, Compiled

Every serious brand has a guide that dictates color, typography, and tone. It doesn’t tell a designer what to make so much as define how the result should feel. Identity is locked. Content is malleable.

LLMs need the same guardrails, but they need them machine-readable. Prompt engineering is the wrong metaphor for this work. What we needed was brand engineering. We started treating the AI not as a creative partner that needs “inspiration” but as a render engine that requires a strict configuration file.

Anatomy of an Image Spec

We built a schema called CBS, Comprehensive Brand Styles. Its primary mandate is singular: style is frozen, scene is free.

    root
    ├── schema_version / project   ← identity
    ├── output                     ← aspect ratio
    ├── style_capsule              ← aesthetic DNA
    │   ├── medium / finish / texture
    │   ├── lighting
    │   ├── composition_rules
    │   └── forbidden[]
    ├── scene                      ← the VARIABLE layer
    │   ├── camera
    │   ├── composition
    │   └── motifs
    ├── color_system               ← palette + roles
    └── render_instructions        ← template flags

Everything outside the scene block is immutable. It dictates the medium (mixed-media analog collage), the lighting (low winter side light), and the finish (matte, visible cut-paper edges). The forbidden array acts as a deny list for an LLM’s probabilistic flights of fancy.

Inside the scene block, we define a concept. Because the style is locked, and because we clamp down on as many decisions as possible, the model has little room to do anything but execute the spec.
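To make "style is frozen, scene is free" concrete, here is a minimal sketch of how the two layers could be combined. The key names follow the tree above and the style values are the ones quoted in this post, but the exact nesting, the `build_spec` helper, and every value marked as a placeholder are assumptions for illustration, not the real CBS file.

```python
import copy
import json

# Only these keys may vary between images (taken from the scene branch of the tree).
ALLOWED_SCENE_KEYS = {"camera", "composition", "motifs"}

FROZEN_LAYER = {
    "schema_version": "1.0",                     # placeholder
    "project": "example-guide",                  # placeholder
    "output": {"aspect_ratio": "16:9"},          # placeholder
    "style_capsule": {
        "medium": "mixed-media analog photography hybrid collage",
        "finish": ["matte", "clean edges"],
        "texture": {"notes": ["fine film grain", "subtle paper creases",
                              "slightly washed blacks"]},
        "lighting": {"shadow_character": "crisp long shadows"},
        "composition_rules": {"band_count": 9},  # nesting is assumed
        "forbidden": ["glossy", "neon"],
    },
    "color_system": {"palette": [{"name": "hero orange", "role": "accent"}]},  # placeholder
    "render_instructions": {},                   # contents not shown in this post
}

def build_spec(scene):
    """Return a full spec: a copy of the frozen layer plus the given scene."""
    unknown = set(scene) - ALLOWED_SCENE_KEYS
    if unknown:
        raise ValueError(f"scene may not set: {sorted(unknown)}")
    spec = copy.deepcopy(FROZEN_LAYER)   # copy so callers cannot mutate the style
    spec["scene"] = copy.deepcopy(scene)
    return spec

spec = build_spec({
    "camera": "eye-level, straight on",              # placeholder
    "composition": "eight suns rising through torn paper strips",
    "motifs": ["sun", "sea", "horizon"],
})
print(json.dumps(spec, indent=2, ensure_ascii=False))
```

Rejecting unknown scene keys is the design choice that matters here: a scene that tries to smuggle in a `style_capsule` override fails loudly instead of quietly changing the look.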

Why Structure Beats Prose

The mechanical advantage of a schema over prose comes down to three things.

Semantic Decomposition: A prose prompt like “bauhaus-inspired collage” is still too loose. How many bands? What lighting angle? The model fills the gaps with its own probability distribution. A spec pins these degrees of freedom by using precise language to break bauhaus into descriptive sub-components. There is nothing left to guess.

Hierarchical Specificity: Modern image models, whether they rely on iterative diffusion processes to sculpt noise [1] or autoregressive logic [2] to construct pixels, respond better to structured, hierarchical constraints than to a well-meaning but imprecise paragraph of prose.

Quantified Constraints: Numbers beat vibes. band_count: 9 collapses the many possibilities that “several bands” or even “9 bands” leaves open [3]. By naming each color’s role and capping accent usage, the spec ensures every generation is coherent.
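A quantified spec can also be checked before it ever reaches the model. The following is a rough sketch under stated assumptions: only `band_count` appears in this post, while `accent_max_share`, the `palette` layout, and the `check_quantified` helper are hypothetical names chosen for the example.

```python
def check_quantified(spec):
    """Return a list of problems; an empty list means every constraint is pinned."""
    problems = []

    band_count = spec.get("composition_rules", {}).get("band_count")
    # bool is a subclass of int in Python, so it is excluded explicitly.
    if isinstance(band_count, bool) or not isinstance(band_count, int) or band_count < 1:
        problems.append("band_count must be a positive integer, not a phrase")

    colors = spec.get("color_system", {})
    for color in colors.get("palette", []):
        if not color.get("role"):
            problems.append(f"color {color.get('name', '?')!r} has no role")

    cap = colors.get("accent_max_share")  # hypothetical field: accent share of the image
    if isinstance(cap, bool) or not isinstance(cap, (int, float)) or not 0 < cap <= 1:
        problems.append("accent_max_share must be a number in (0, 1]")

    return problems

vague = {
    "composition_rules": {"band_count": "several"},
    "color_system": {"palette": [{"name": "hero orange"}]},
}
pinned = {
    "composition_rules": {"band_count": 9},
    "color_system": {
        "palette": [{"name": "hero orange", "role": "accent"}],
        "accent_max_share": 0.1,  # placeholder value
    },
}
print(len(check_quantified(vague)))  # 3
print(check_quantified(pinned))      # []
```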

Taste as an Engineering Problem

Most prompt advice ignores the model’s inherent gravitational pull. Left to their own devices, image models gravitate toward a specific aesthetic: slick, dimensionless, hyper-saturated. It is the visual equivalent of an LLM’s tendency to write in a helpful, enthusiastic tone. It is the “average” across the training distribution. The continental breakfast, if you will.

The spec fights this by defining a style that is specifically not that. Every property in the style_capsule is a deliberate departure.

medium: "mixed-media analog photography hybrid collage". This forces the model to mimic a physical process, moving away from purely digital noise.

texture.notes: ["fine film grain", "subtle paper creases", "slightly washed blacks"]. These are the artifacts of analog media. They break the synthetic smoothness that signals “generated.”

finish: ["matte", "clean edges"]. The matte finish kills the default gloss. The cut-paper edges introduce handmade geometry that feels purposeful.

shadow_character: "crisp long shadows". Models have a tendency to default to flat ambient lighting. By specifying directional, moody light, we force depth.

This is an intentional overcorrection. We are defining an aesthetic that the model wouldn’t produce by accident. That is why the output feels authored.

The Series

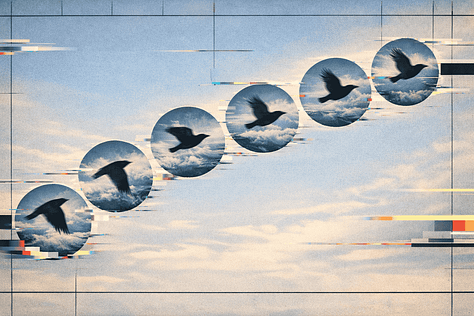

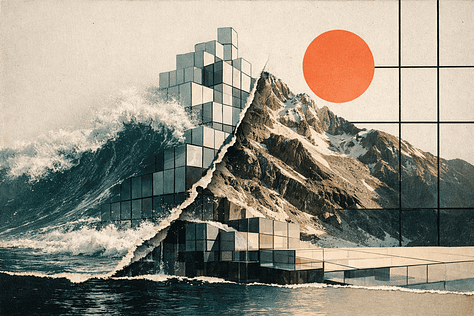

The editorial images in our agent-building guide were generated using this schema. The only thing that changed between images was the scene block.

Laid side-by-side, they read as a series. Each image is distinct, but they share a lineage. The reliability from the schema is striking.
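The "only the scene changed" claim is easy to verify mechanically. This is a sketch of one possible check, not the pipeline we ran: `style_fingerprint` and `build_series` are hypothetical helpers, and the frozen layer and scenes below are trimmed placeholders.

```python
import copy
import hashlib
import json

def style_fingerprint(spec):
    """Hash everything except the scene block, so equal hashes mean equal style."""
    frozen = {key: value for key, value in spec.items() if key != "scene"}
    payload = json.dumps(frozen, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()

def build_series(frozen_layer, scenes):
    """Build one spec per scene and refuse to return a series whose style differs."""
    specs = []
    for scene in scenes:
        spec = copy.deepcopy(frozen_layer)
        spec["scene"] = copy.deepcopy(scene)
        specs.append(spec)
    if len({style_fingerprint(s) for s in specs}) > 1:
        raise ValueError("style drifted between images in the series")
    return specs

frozen_layer = {
    "style_capsule": {"finish": ["matte", "clean edges"], "forbidden": ["glossy", "neon"]},
}
scenes = [
    {"motifs": ["sun", "sea"]},        # placeholder scenes
    {"motifs": ["ladder", "clouds"]},
]
series = build_series(frozen_layer, scenes)
print(len(series), len({style_fingerprint(s) for s in series}))  # 2 1
```

The same fingerprint is also useful on spec files edited by hand, where a stray change to the style layer would otherwise go unnoticed until the images came back wrong.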

Identity as Infrastructure

Identity is a kind of infrastructure. When we treat it as a set of hard constraints rather than a list of adjectives, the output stops looking uninspired and starts looking like intention. We didn’t invent anything new. We just wrote down time-tested design rules in a manner that robots appreciate.

[2] ChatGPT’s latest image models.

[3] A large portion of an LLM’s training corpus is code, and models respond well when prompts are structured to feel encoded.
