The Unbearable Weight of Massive Pull Requests

Agents are producing lots of code. How do reviewers keep up?

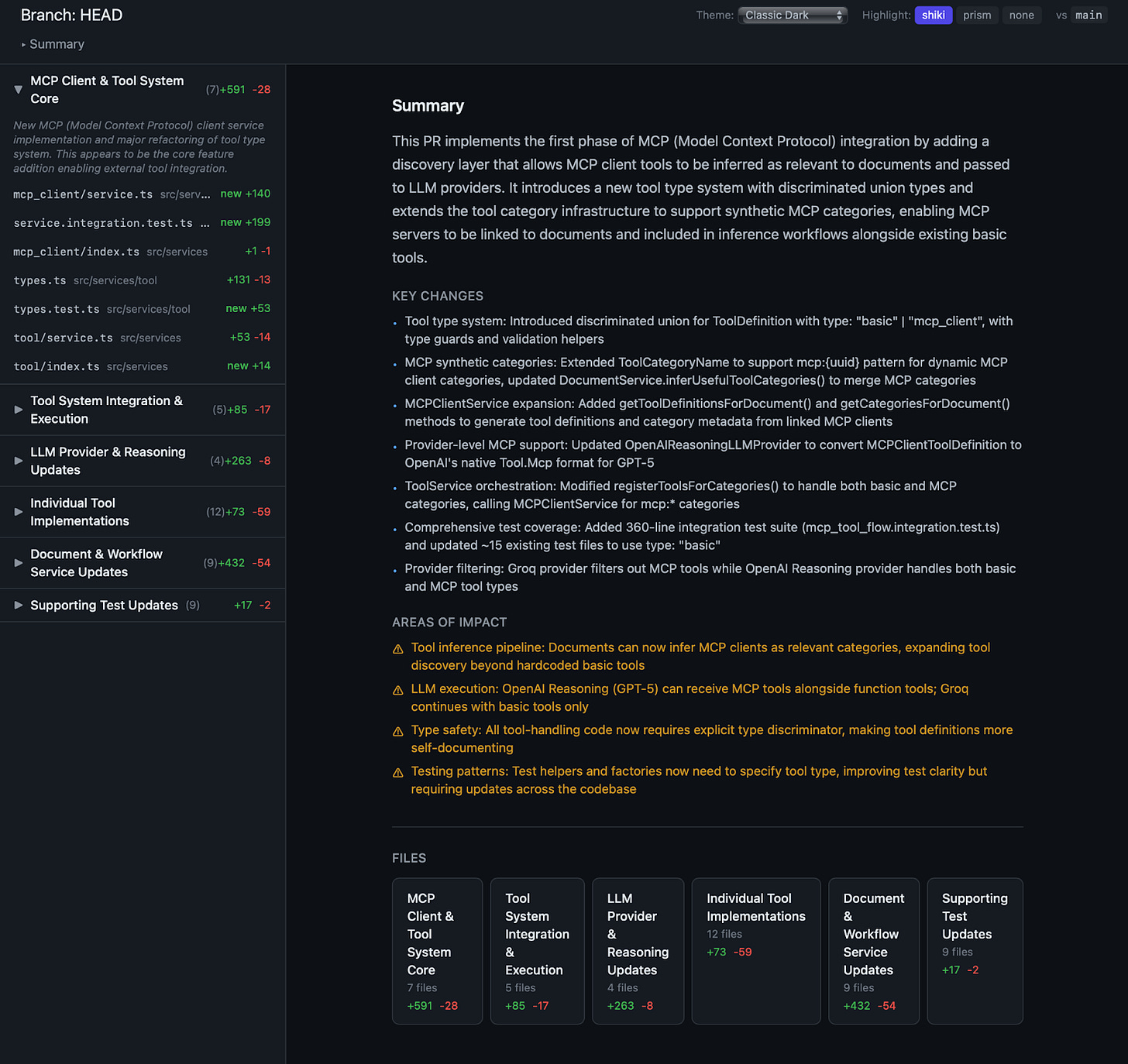

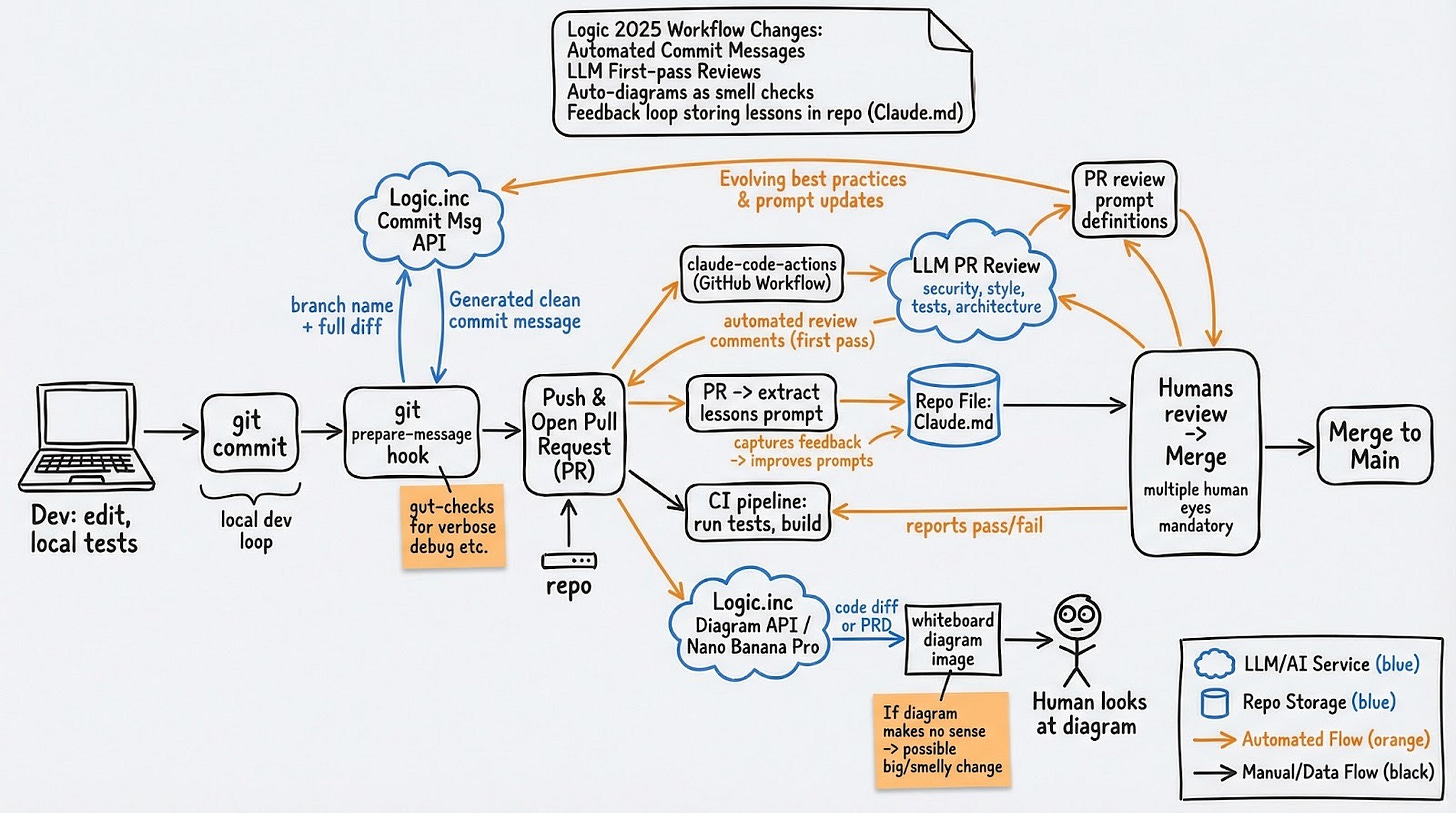

Last month I was asked to review the code for the new version of our agent arena. It included two new models, each producing its own output for 53 distinct app prompts. So the PR had 107 changed files and over 114,000 new lines of code.

As I’ve done thousands of times in my career, I opened our standard code review UI. The files were too large to render inline: each one had to be clicked and loaded individually. The browser couldn’t actually handle it all. I was staring at a code review… that I physically could not review.

While I know the agent arena doesn’t carry the same risk profile as our production API, I still care about quality and reliability, so I’m not ready to approve a PR without reading every line.

The bottleneck is shifting

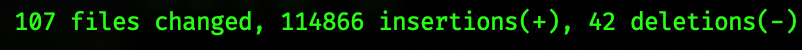

Here at logic.inc, we’re no strangers to agentic coding. We have bee hives producing a lot of honey, and are actively experimenting with coordination methods for all our agents.

We’re a software company with SOC 2 and HIPAA obligations, so writing code is only part of the job. It also needs to be reviewed, built, deployed, and monitored. Regardless of how code gets created, if it’s headed to production, at least two humans look at it. When my agents write the code, I’m the first reviewer. I don’t send it out until I’m satisfied, and after I’ve done that, a teammate can review and approve. This maintains our quality bar, supports our compliance posture, and keeps knowledge distributed across the team.

The problem is that while the process for producing code has changed over the last 1-2 years, the process for reviewing it has not.

Amdahl’s law says that speeding up one part of a pipeline only speeds up the whole in proportion to that part’s share of the total time. Past that point, it just shifts the bottleneck. As code production has gotten faster, review has grown to represent a larger portion of the total cycle time. If you can produce in an afternoon what used to take two weeks, the single day you spend reviewing it is now a major constraint on the critical path.
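To put rough numbers on that, here is a small sketch of the arithmetic. The figures are assumptions chosen to match the wording above, not measurements: "two weeks" is read as ten working days, "an afternoon" as half a day, and review as one day. The function names are made up for illustration.

```python
def review_share(produce_days: float, review_days: float) -> float:
    """Fraction of the total cycle time that is spent on review."""
    return review_days / (produce_days + review_days)


def overall_speedup(old_produce: float, new_produce: float, review_days: float) -> float:
    """End-to-end speedup when only the production step gets faster."""
    return (old_produce + review_days) / (new_produce + review_days)


# Assumed figures, in working days (not measurements from the post).
OLD_PRODUCE = 10.0  # "two weeks" read as ten working days
NEW_PRODUCE = 0.5   # "an afternoon" read as half a day
REVIEW = 1.0        # "the single day you spend reviewing"

if __name__ == "__main__":
    print(f"review share before: {review_share(OLD_PRODUCE, REVIEW):.0%}")  # 9%
    print(f"review share after:  {review_share(NEW_PRODUCE, REVIEW):.0%}")  # 67%
    print(f"production speedup:  {OLD_PRODUCE / NEW_PRODUCE:.0f}x")  # 20x
    print(f"overall speedup:     {overall_speedup(OLD_PRODUCE, NEW_PRODUCE, REVIEW):.1f}x")  # 7.3x
```

Under these assumptions, a 20x faster production step yields only about a 7x faster cycle, and review goes from roughly a tenth of the cycle to two thirds of it.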

What’s actually broken

The standard code review UI is a list of file diffs sorted alphabetically with a description at the top. This has always had problems. Alphabetical ordering forces the reviewer to build cognitive context from scratch, jumping between files based on filename rather than relevance. A change to a database migration sits next to a change to a CSS file because “d” comes before “s.” The reviewer has to hold the full picture in their head with no help from the tool.

That was tolerable when a PR had 15 files, but it really breaks down at 107.

What I needed

A review tool that doesn’t melt. Honestly, even just rendering large file diffs locally without choking the browser would be an improvement over what I had.

Semantic grouping. Files organized by how they contribute to the feature, not by the alphabet. This kind of grouping was impossible to automate before LLMs, because it requires understanding intent, not just parsing syntax - but it is very much possible now.

Automated specialist analysis. I wanted separate agents, each focused on a specific dimension of the code (security, best practices, consistency), running in parallel to catch what I’d miss on a solo pass through 114k lines.
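As a rough illustration of the "separate agents running in parallel" idea, here is a minimal sketch. It is not how Prism is built. The reviewer functions are hypothetical placeholders that use simple string checks where a real specialist would call an LLM, and every name here is an assumption made for the example.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Finding:
    agent: str
    message: str


def added_lines(diff: str) -> List[str]:
    """Return lines added by a unified diff, skipping the '+++' file headers."""
    return [
        line[1:]
        for line in diff.splitlines()
        if line.startswith("+") and not line.startswith("+++")
    ]


def security_review(diff: str) -> List[Finding]:
    # Hypothetical placeholder: a real specialist would send the diff to an LLM.
    return [
        Finding("security", f"possible hard-coded secret: {line.strip()}")
        for line in added_lines(diff)
        if "password" in line.lower()
    ]


def best_practices_review(diff: str) -> List[Finding]:
    # Hypothetical placeholder: flags leftover TODO markers in added lines.
    return [
        Finding("best_practices", f"unresolved TODO: {line.strip()}")
        for line in added_lines(diff)
        if "TODO" in line
    ]


REVIEWERS: Dict[str, Callable[[str], List[Finding]]] = {
    "security": security_review,
    "best_practices": best_practices_review,
}


def run_specialists(diff: str, reviewers=REVIEWERS) -> Dict[str, List[Finding]]:
    """Run every reviewer on the same diff concurrently; collect findings by name."""
    with ThreadPoolExecutor(max_workers=max(1, len(reviewers))) as pool:
        futures = {name: pool.submit(fn, diff) for name, fn in reviewers.items()}
        return {name: future.result() for name, future in futures.items()}
```

Threads are a reasonable fit here because a real specialist would spend most of its time waiting on a model response. Each reviewer sees the same diff and reports independently, so adding another lens means adding one more entry to the dictionary.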

Building Prism

I started at the end of last year with a Logic Doc and a GitHub Action that turns a code diff into a whiteboard diagram. This was useful for conveying the shape of a change at a glance, but still not enough for the full review process.

Prism came out of that 107-file pull request. I initially spent about 30 minutes vibe-coding and had v0.1: a tool that takes a git branch, analyzes the diff, and gives me three things:

Semantic file grouping. An LLM classifies each changed file by its role in the feature and groups them so I can walk through the review in cognitive order. Database changes together. API changes together. Test scaffolding together. Formatting-only changes in their own group at the bottom.

Specialist agent reviews. A set of focused agents that each review the diff through a specific lens: security audit, best practice violations, consistency checks. Each one produces findings independently.

A fast, syntax-highlighted diff viewer. Served locally, loads instantly, scrolls without lag. No waiting for a browser to choke on a 2,000-line file.
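The grouping step can be sketched roughly as follows. This is an illustration under assumptions, not Prism's code: `classify_file` is a hypothetical stand-in that uses path heuristics where the real tool asks an LLM, and the role names, the "other" bucket, and the exact ordering are invented for the example.

```python
from collections import defaultdict
from pathlib import PurePosixPath
from typing import Dict, List, Tuple

# Assumed review order: feature work first, formatting-only changes last.
REVIEW_ORDER = ["database", "api", "tests", "other", "formatting"]


def classify_file(path: str, formatting_only: bool = False) -> str:
    """Hypothetical stand-in for the LLM classifier: guess a file's role from its path."""
    if formatting_only:
        return "formatting"
    p = PurePosixPath(path)
    parts = set(p.parts)
    if "migrations" in parts or p.suffix == ".sql":
        return "database"
    if "tests" in parts or p.name.startswith("test_"):
        return "tests"
    if "api" in parts:
        return "api"
    return "other"


def group_for_review(changed: Dict[str, bool]) -> List[Tuple[str, List[str]]]:
    """Group changed files by role and return the groups in review order.

    `changed` maps each file path to whether its diff is formatting-only.
    """
    groups: Dict[str, List[str]] = defaultdict(list)
    for path, formatting_only in changed.items():
        groups[classify_file(path, formatting_only)].append(path)
    # Emit only the roles that actually occur, in the assumed order.
    return [(role, sorted(groups[role])) for role in REVIEW_ORDER if role in groups]


if __name__ == "__main__":
    changed = {
        "web/styles.css": True,
        "tests/test_users.py": False,
        "api/users.py": False,
        "db/migrations/0002_add_email.sql": False,
    }
    for role, files in group_for_review(changed):
        print(role, files)
```

With the sample input, the migration comes first and the formatting-only stylesheet comes last, regardless of where the filenames fall alphabetically.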

That first version got me through the arena review. On subsequent PRs, it’s been consistently useful in ways I didn’t anticipate. It groups unimportant formatting changes away from the real feature work, which alone saves significant review time. It flags areas of concern I would have scrolled past. And I haven’t even built most of the specialist agents I want: frontend review, database schema analysis, dependency auditing, test coverage gaps.

The workflow now is two commands: `prism fetch <pr url>` and `prism run`. It pulls the diff, groups it, runs the analyses, and serves up the result. Reviews happen faster without cutting corners on how carefully the code gets examined.

This problem is going to get worse

The ratio of time spent producing code to time spent reviewing it is only going in one direction: down. Every team adopting agentic coding tools will eventually hit the same wall in some capacity: output that exceeds what their review process can absorb.

The answer can’t be to just “use AI to review AI code.” Automated review helps, but compliance, knowledge sharing, and quality judgment still require humans in the loop. I think the real opportunity is in the tooling between generation and approval: smarter grouping, focused analysis, and better rendering. This is the plumbing that lets human review scale alongside agent-generated output.

Prism isn’t ready to release publicly yet, and, honestly, maybe it never will be. But the ideas behind it took ~30 minutes to prototype and have already changed how I review code. If your PRs are getting bigger and your review tools haven’t changed, the gap is only going to widen. I’d recommend giving a tool like this some consideration.

Are you planning to share Prism in the future?